Product Name

Product description goes here.

$0.00

0 in stock

` and `` in the embed code below:

```html

```

The embed code above can also be found on the **Stream** page of the Cloudflare dashboard.

[Go to **Videos**](https://dash.cloudflare.com/?to=/:account/stream/videos)

### Next steps

* [Secure your stream](https://developers.cloudflare.com/stream/viewing-videos/securing-your-stream/)

* [View live viewer counts](https://developers.cloudflare.com/stream/getting-analytics/live-viewer-count/)

## Accessibility considerations

To make your video content more accessible, include [captions](https://developers.cloudflare.com/stream/edit-videos/adding-captions/) and [high-quality audio recording](https://www.w3.org/WAI/media/av/av-content/).

---

title: Analytics · Cloudflare Stream docs

description: "Stream provides server-side analytics that can be used to:"

lastUpdated: 2025-09-09T16:21:39.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/stream/getting-analytics/

md: https://developers.cloudflare.com/stream/getting-analytics/index.md

---

Stream provides server-side analytics that can be used to:

* Identify most viewed video content in your app or platform.

* Identify where content is viewed from and when it is viewed.

* Understand which creators on your platform are publishing the most viewed content, and analyze trends.

You can access data on either:

* The Stream **Analytics** page of the Cloudflare dashboard.

[Go to **Analytics**](https://dash.cloudflare.com/?to=/:account/stream/analytics)

* The [GraphQL Analytics API](https://developers.cloudflare.com/stream/getting-analytics/fetching-bulk-analytics).

Users will need the **Analytics** permission to access analytics via Dash or GraphQL.

---

title: Manage videos · Cloudflare Stream docs

lastUpdated: 2024-08-22T17:44:03.000Z

chatbotDeprioritize: true

source_url:

html: https://developers.cloudflare.com/stream/manage-video-library/

md: https://developers.cloudflare.com/stream/manage-video-library/index.md

---

---

title: Pricing · Cloudflare Stream docs

description: "Cloudflare Stream lets you broadcast, store, and deliver video

using a simple, unified API and simple pricing. Stream bills on two dimensions

only:"

lastUpdated: 2025-11-17T14:08:01.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/stream/pricing/

md: https://developers.cloudflare.com/stream/pricing/index.md

---

Media Transformations is now GA:

Billing for Media Transformations will begin on November 1st, 2025.

## Pricing for Stream

Cloudflare Stream lets you broadcast, store, and deliver video using a simple, unified API and simple pricing. Stream bills on two dimensions only:

* **Minutes of video stored:** the total duration of uploaded video and live recordings

* **Minutes of video delivered:** the total duration of video delivered to end users

On-demand and live video are billed the same way.

Ingress (sending your content to us) and encoding are always free. Bandwidth is already included in "video delivered" with no additional egress (traffic/bandwidth) fees.

### Minutes of video stored

Storage is a prepaid pricing dimension purchased in increments of $5 per 1,000 minutes stored, regardless of file size. You can check how much storage you have and how much you have used on the **Stream** page of the Cloudflare dashboard.

[Go to **Videos**](https://dash.cloudflare.com/?to=/:account/stream/videos)

Storage is consumed by:

* Original videos uploaded to your account

* Recordings of live broadcasts

* The reserved `maxDurationSeconds` for Direct Creator and TUS uploads which have not been completed. After these uploads are complete or the upload link expires, this reservation is released.

Storage is not consumed by:

* Videos in an unplayable or errored state

* Expired Direct Creator upload links

* Deleted videos

* Downloadable files generated for [MP4 Downloads](https://developers.cloudflare.com/stream/viewing-videos/download-videos/)

* Multiple quality levels that Stream generates for each uploaded original

Storage consumption is rounded up to the second of video duration; file size does not matter. Video stored in Stream does not incur additional storage fees from other storage products such as R2.

Note

If you run out of storage, you will not be able to upload new videos or start new live streams until you purchase more storage or delete videos.

Enterprise customers *may* continue to upload new content beyond their contracted quota without interruption.

### Minutes of video delivered

Delivery is a post-paid, usage-based pricing dimension billed at $1 per 1,000 minutes delivered. You can check how much delivery you have used on the **Billing** page or the Stream **Analytics** page of the Cloudflare dashboard.

[Go to **Billing** ](https://dash.cloudflare.com/?to=/:account/billing)[Go to **Analytics**](https://dash.cloudflare.com/?to=/:account/stream/analytics)

Delivery is counted for the following uses:

* Playback on the web or an app using [Stream's built-in player](https://developers.cloudflare.com/stream/viewing-videos/using-the-stream-player/) or the [HLS or DASH manifests](https://developers.cloudflare.com/stream/viewing-videos/using-own-player/)

* MP4 Downloads

* Simulcasting via SRT or RTMP live outputs

Delivery is counted by HTTP requests for video segments or parts of the MP4. Therefore:

* Client-side preloading and buffering is counted as billable delivery.

* Content played from client-side/browser cache is *not* billable, like a short looping video. Some mobile app player libraries do not cache HLS segments by default.

* MP4 Downloads are billed by percentage of the file delivered.

Minutes delivered for web playback (Stream Player, HLS, and DASH) are rounded to the *segment* length: for uploaded content, segments are four seconds. Live broadcast and recording segments are determined by the keyframe interval or GOP size of the original broadcast.

### Example scenarios

**Two people each watch thirty minutes of a video or live broadcast. How much would it cost?**

This will result in 60 minutes of Minutes Delivered usage (or $0.06). Stream bills on total minutes of video delivered across all users.

**I have a really large file. Does that cost more?**

The cost to store a video is based only on its duration, not its file size. If the file is within the [30GB max file size limitation](https://developers.cloudflare.com/stream/faq/#is-there-a-limit-to-the-amount-of-videos-i-can-upload), it will be accepted. Be sure to use an [upload method](https://developers.cloudflare.com/stream/uploading-videos/) like Upload from Link or TUS that handles large files well.

**If I make a Direct Creator Upload link with a maximum duration (`maxDurationSeconds`) of 600 seconds which expires in 1 hour, how is storage consumed?**

* Ten minutes (600 seconds) will be subtracted from your available storage immediately.

* If the link is unused in one hour, those 10 minutes will be released.

* If the creator link is used to upload a five minute video, when the video is uploaded and processed, the 10 minute reservation will be released and the true five minute duration of the file will be counted.

* If the creator link is used to upload a five minute video but it fails to encode, the video will be marked as errored, the reserved storage will be released, and no storage use will be counted.

**I am broadcasting live, but no one is watching. How much does that cost?**

A live broadcast with no viewers will cost $0 for minutes delivered, but the recording of the broadcast will count toward minutes of video stored.

If someone watches the recording, that will be counted as minutes of video delivered.

If the recording is deleted, the storage use will be released.

**I want to store and deliver millions of minutes a month. Do you have volume pricing?**

Yes, contact our [Sales Team](https://www.cloudflare.com/plans/enterprise/contact/).

## Pricing for Media Transformations

After November 1st, 2025, Media Transforamtions and Image Transformations will use the same subscriptions and usage metrics.

* Generating a still frame (single image) from a video counts as 1 transformation.

* Generating an optimized video or extracting audio counts as 1 transformation *per second of the output* content.

* Each unique transformation, as determined by input and unique combination of flags, is only billed once per calendar month.

* All Media and Image Transformations cost $0.50 per 1,000 monthly unique transformation operations, with a free monthly allocation of 5,000.

---

title: Stream API Reference · Cloudflare Stream docs

lastUpdated: 2024-12-16T22:33:26.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/stream/stream-api/

md: https://developers.cloudflare.com/stream/stream-api/index.md

---

---

title: Stream live video · Cloudflare Stream docs

description: Cloudflare Stream lets you or your users stream live video, and

play live video in your website or app, without managing and configuring any

of your own infrastructure.

lastUpdated: 2024-08-13T19:56:56.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/stream/stream-live/

md: https://developers.cloudflare.com/stream/stream-live/index.md

---

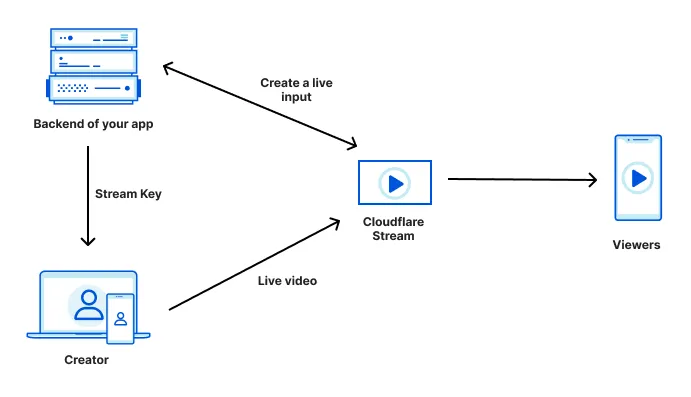

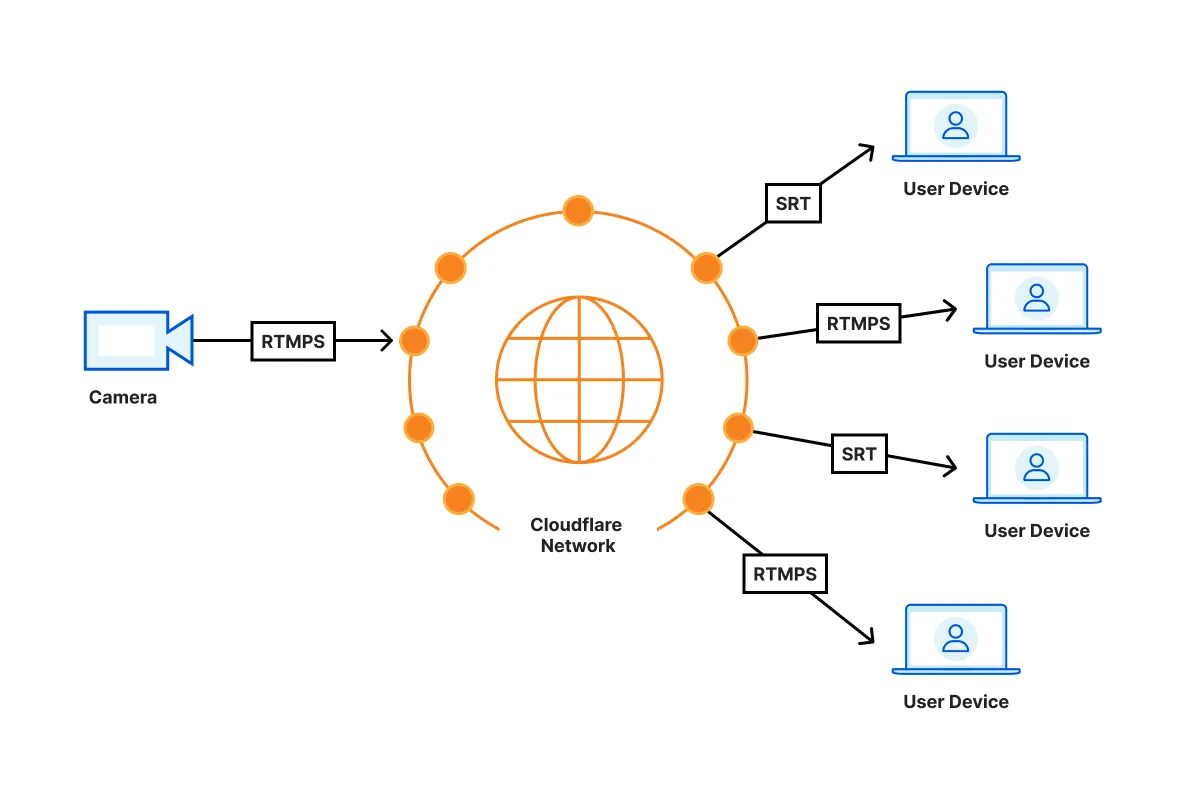

Cloudflare Stream lets you or your users [stream live video](https://www.cloudflare.com/learning/video/what-is-live-streaming/), and play live video in your website or app, without managing and configuring any of your own infrastructure.

## How Stream works

Stream handles video streaming end-to-end, from ingestion through delivery.

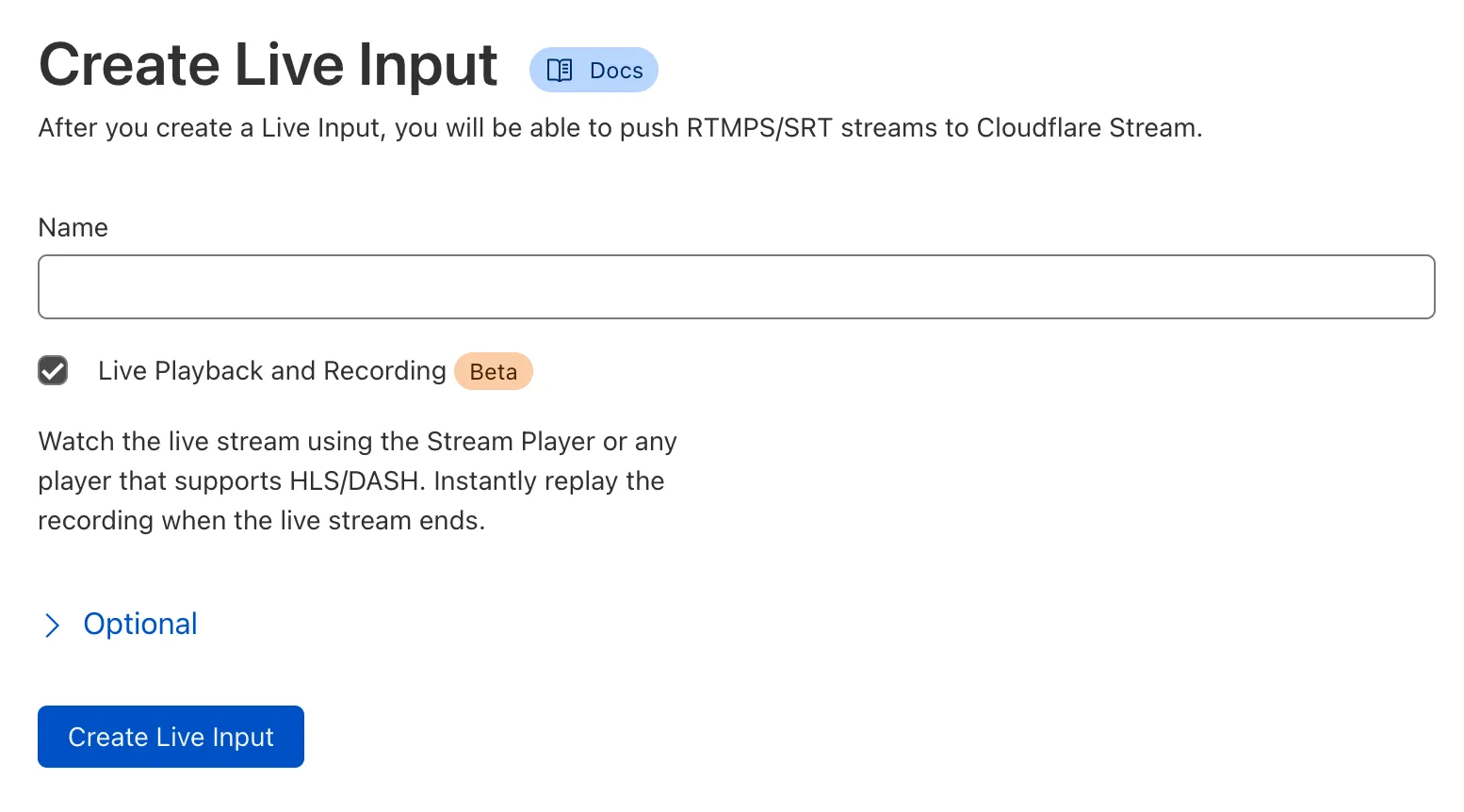

1. For each live stream, you create a unique live input, either using the Stream Dashboard or API.

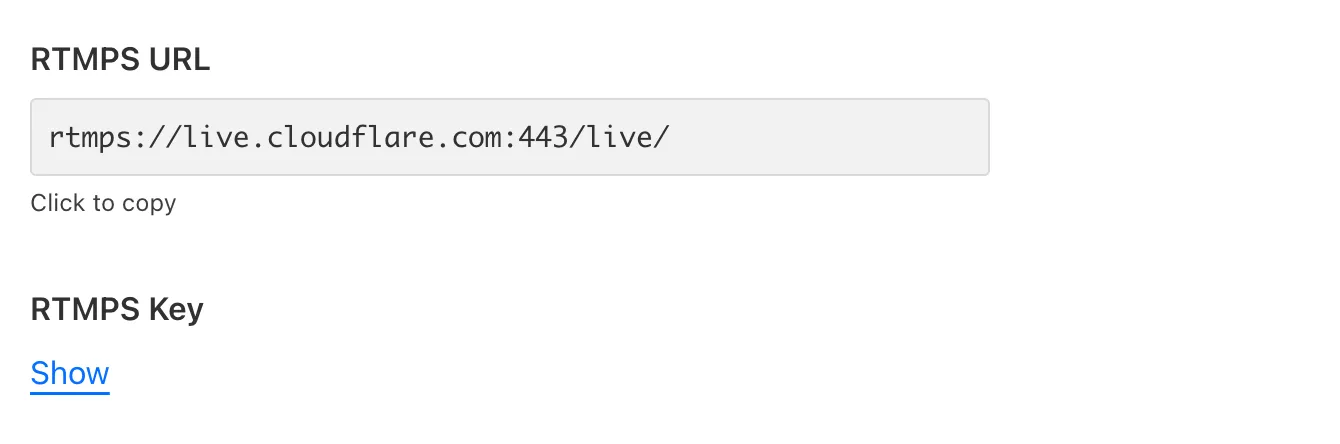

2. Each live input has a unique Stream Key, that you provide to the creator who is streaming live video.

3. Creators use this Stream Key to broadcast live video to Cloudflare Stream, over either RTMPS or SRT.

4. Cloudflare Stream encodes this live video at multiple resolutions and delivers it to viewers, using Cloudflare's Global Network. You can play video on your website using the [Stream Player](https://developers.cloudflare.com/stream/viewing-videos/using-the-stream-player/) or using [any video player that supports HLS or DASH](https://developers.cloudflare.com/stream/viewing-videos/using-own-player/).

## RTMP reconnections

As long as your streaming software reconnects, Stream Live will continue to ingest and stream your live video. Make sure the streaming software you use to push RTMP feeds automatically reconnects if the connection breaks. Some apps like OBS reconnect automatically while other apps like FFmpeg require custom configuration.

## Bitrate estimates at each quality level (bitrate ladder)

Cloudflare Stream transcodes and makes live streams available to viewers at multiple quality levels. This is commonly referred to as [Adaptive Bitrate Streaming (ABR)](https://www.cloudflare.com/learning/video/what-is-adaptive-bitrate-streaming).

With ABR, client video players need to be provided with estimates of how much bandwidth will be needed to play each quality level (ex: 1080p). Stream creates and updates these estimates dynamically by analyzing the bitrate of your users' live streams. This ensures that live video plays at the highest quality a viewer has adequate bandwidth to play, even in cases where the broadcaster's software or hardware provides incomplete or inaccurate information about the bitrate of their live content.

### How it works

If a live stream contains content with low visual complexity, like a slideshow presentation, the bandwidth estimates provided in the HLS and DASH manifests will be lower — a stream like this has a low bitrate and requires relatively little bandwidth, even at high resolution. This ensures that as many viewers as possible view the highest quality level.

Conversely, if a live stream contains content with high visual complexity, like live sports with motion and camera panning, the bandwidth estimates provided in the manifest will be higher — a stream like this has a high bitrate and requires more bandwidth. This ensures that viewers with inadequate bandwidth switch down to a lower quality level, and their playback does not buffer.

### How you benefit

If you're building a creator platform or any application where your end users create their own live streams, your end users likely use streaming software or hardware that you cannot control. In practice, these live streaming setups often send inaccurate or incomplete information about the bitrate of a given live stream, or are misconfigured by end users.

Stream adapts based on the live video that we actually receive, rather than blindly trusting the advertised bitrate. This means that even in cases where your end users' settings are less than ideal, client video players will still receive the most accurate bitrate estimates possible, ensuring the highest quality video playback for your viewers, while avoiding pushing configuration complexity back onto your users.

## Transition from live playback to a recording

Recordings are available for live streams within 60 seconds after a live stream ends.

You can check a video's status to determine if it's ready to view by making a [`GET` request to the `stream` endpoint](https://developers.cloudflare.com/stream/stream-live/watch-live-stream/#use-the-api) and viewing the `state` or by [using the Cloudflare dashboard](https://developers.cloudflare.com/stream/stream-live/watch-live-stream/#use-the-dashboard).

After the live stream ends, you can [replay live stream recordings](https://developers.cloudflare.com/stream/stream-live/replay-recordings/) in the `ready` state by using one of the playback URLs.

## Billing

Stream Live is billed identically to the rest of Cloudflare Stream.

* You pay $5 per 1000 minutes of recorded video.

* You pay $1 per 1000 minutes of delivered video.

All Stream Live videos are automatically recorded. There is no additional cost for encoding and packaging live videos.

---

title: Transform videos · Cloudflare Stream docs

description: You can optimize and manipulate videos stored outside of Cloudflare

Stream with Media Transformations. Transformed videos and images are served

from one of your zones on Cloudflare.

lastUpdated: 2026-01-29T11:44:10.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/stream/transform-videos/

md: https://developers.cloudflare.com/stream/transform-videos/index.md

---

Media Transformations is now GA:

Billing for Media Transformations will begin on November 1st, 2025.

You can optimize and manipulate videos stored *outside* of Cloudflare Stream with Media Transformations. Transformed videos and images are served from one of your zones on Cloudflare.

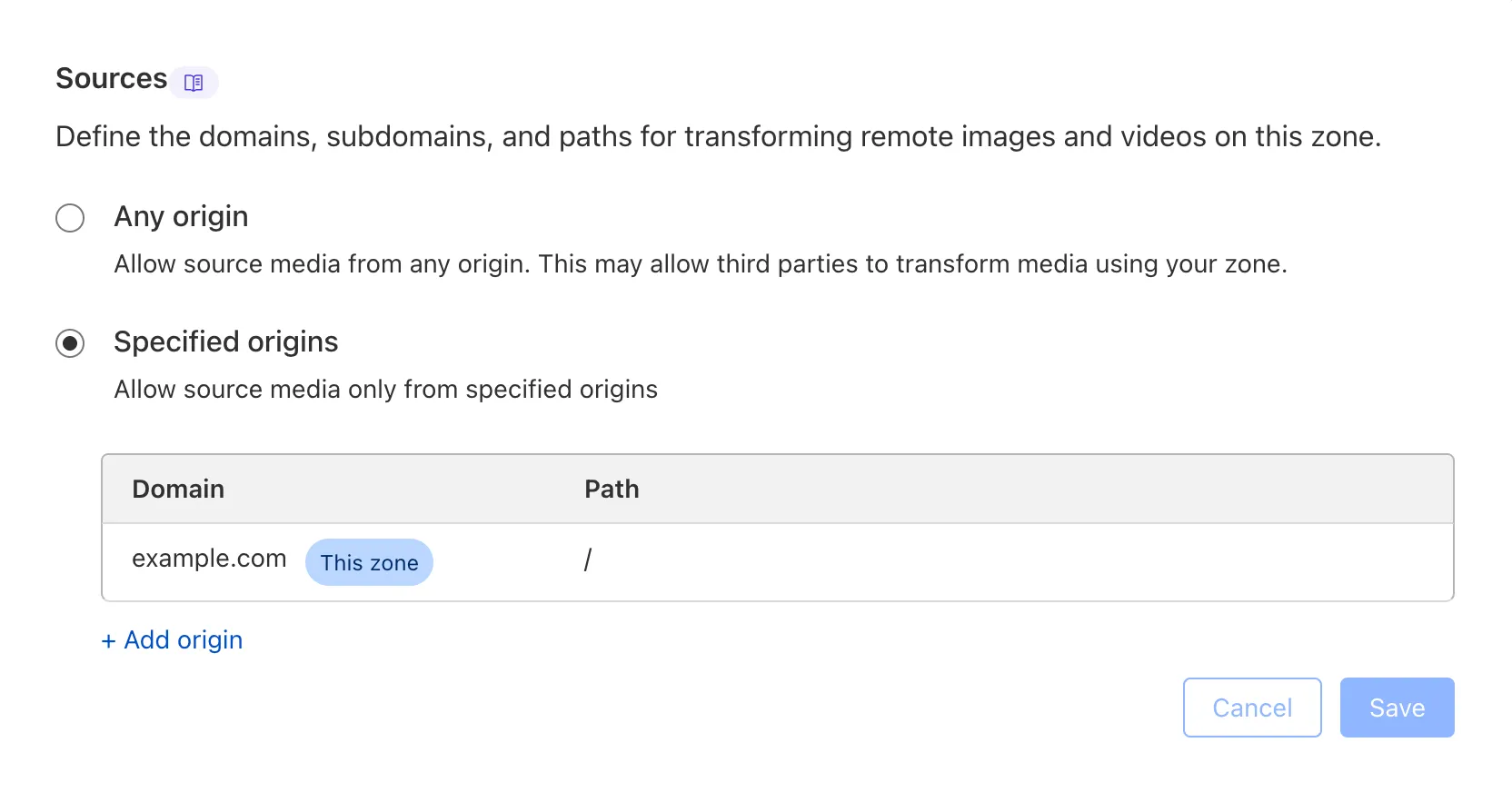

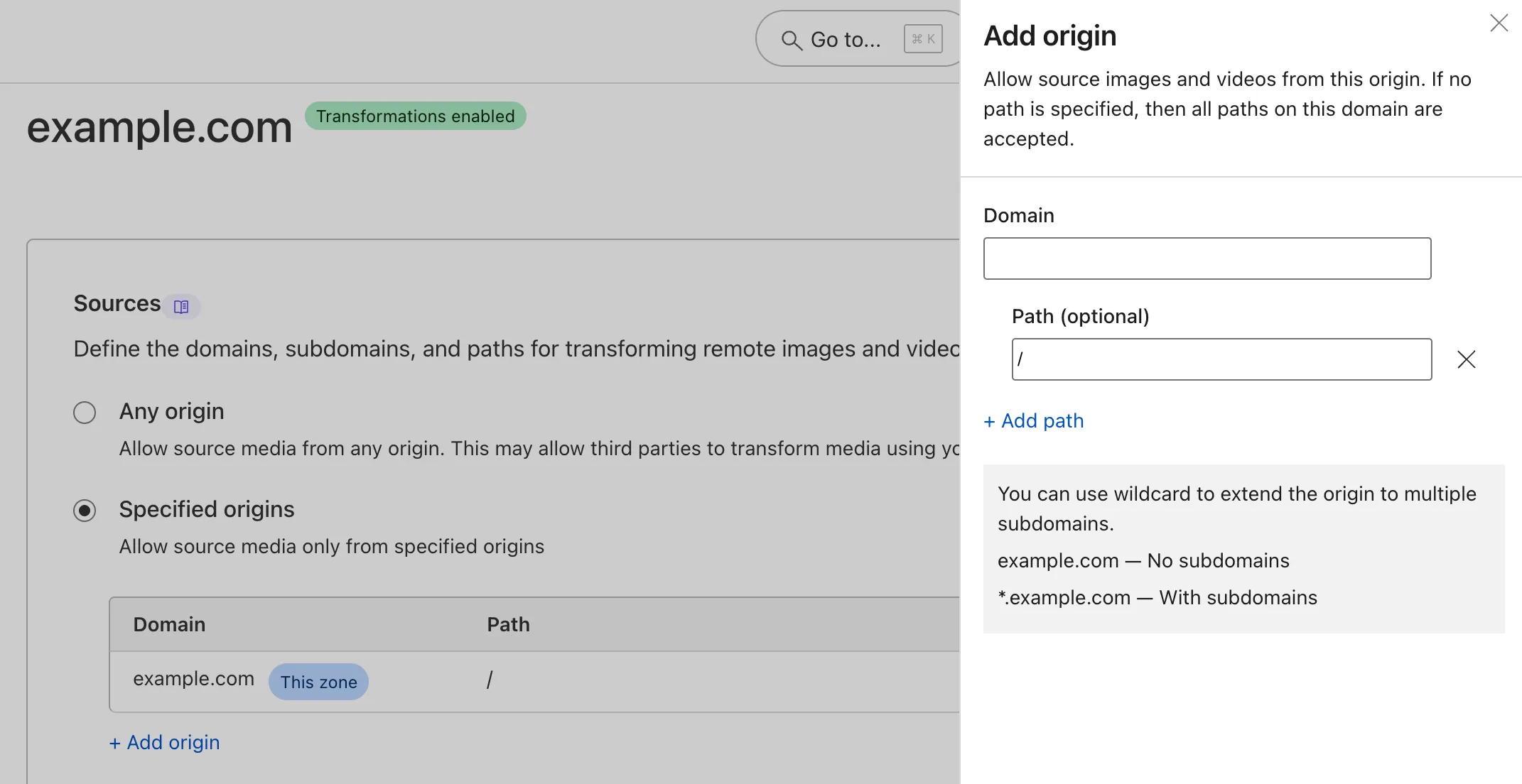

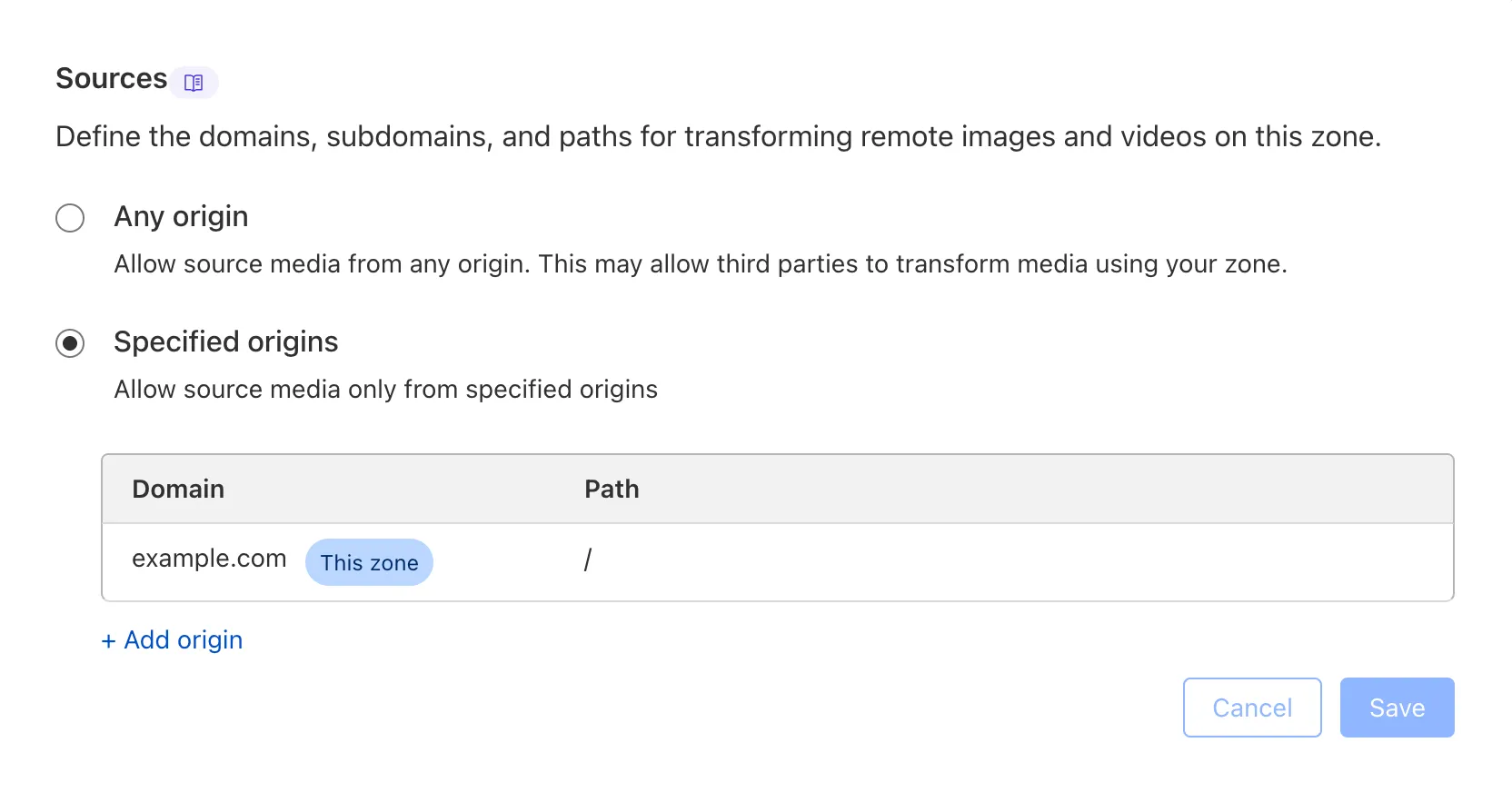

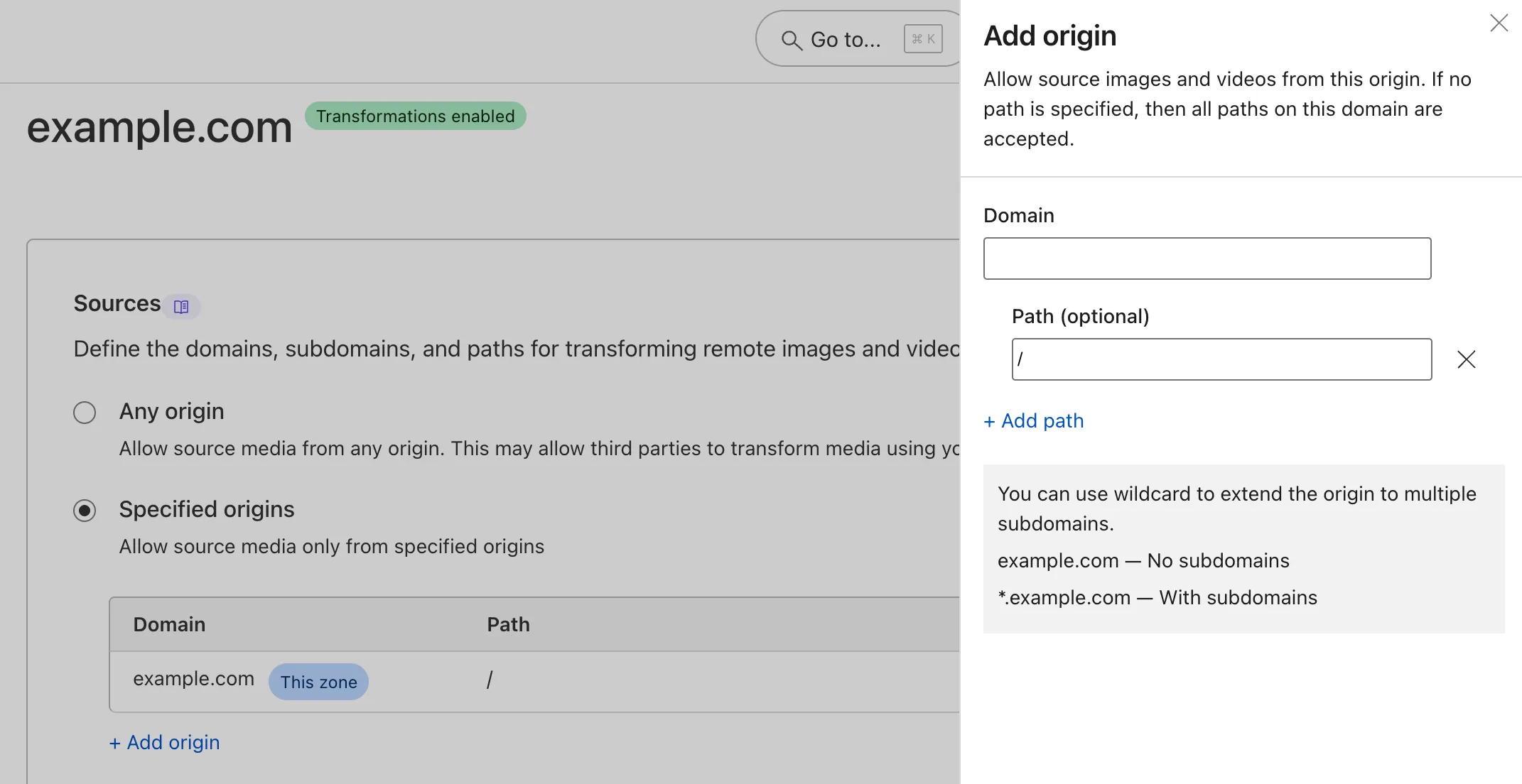

To transform a video or image, you must [enable transformations](https://developers.cloudflare.com/stream/transform-videos/#getting-started) for your zone. If your zone already has Image Transformations enabled, you can also optimize videos with Media Transformations.

## Getting started

You can dynamically optimize and generate still images from videos that are stored *outside* of Cloudflare Stream with Media Transformations.

Cloudflare will automatically cache every transformed video or image on our global network so that you store only the original image at your origin.

To enable transformations on your zone:

1. In the Cloudflare dashboard, go to the **Transformations** page.

[Go to **Transformations**](https://dash.cloudflare.com/?to=/:account/stream/video-transformations)

2. Locate the specific zone where you want to enable transformations.

3. Select **Enable** for the zone.

## Transform a video by URL

You can convert and resize videos by requesting them via a specially-formatted URL, without writing any code. The URL format is:

```plaintext

https://example.com/cdn-cgi/media//

```

* `example.com`: Your website or zone on Cloudflare, with Transformations enabled.

* `/cdn-cgi/media/`: A prefix that identifies a special path handled by Cloudflare's built-in media transformation service.

* ``: A comma-separated list of options. Refer to the available options below.

* ``: A full URL (starting with `https://` or `http://`) of the original asset to resize.

For example, this URL will source an HD video from an R2 bucket, shorten it, crop and resize it as a square, and remove the audio.

```plaintext

https://example.com/cdn-cgi/media/mode=video,time=5s,duration=5s,width=500,height=500,fit=crop,audio=false/https://pub-8613b7f94d6146408add8fefb52c52e8.r2.dev/aus-mobile-demo.mp4

```

The result is an MP4 that can be used in an HTML video element without a player library.

## Options

### `mode`

Specifies the kind of output to generate.

* `video`: Outputs an H.264/AAC optimized MP4 file.

* `frame`: Outputs a still image.

* `spritesheet`: Outputs a JPEG with multiple frames.

* `audio`: Outputs an AAC encoded M4A file.

### `time`

Specifies when to start extracting the output in the input file. Depends on `mode`:

* When `mode` is `spritesheet`, `video`, or `audio`, specifies the timestamp where the output will start.

* When `mode` is `frame`, specifies the timestamp from which to extract the still image.

* Formats as a time string, for example: 5s, 2m

* Acceptable range: 0 – 10m

* Default: 0

### `duration`

The duration of the output video or spritesheet. Depends on `mode`:

* When `mode` is `video` or `audio`, specifies the duration of the output.

* When `mode` is `spritesheet`, specifies the time range from which to select frames.

* Acceptable range: 1s - 60s (or 1m)

* Default: input duration or 60 seconds, whichever is shorter

### `fit`

In combination with `width` and `height`, specifies how to resize and crop the output. If the output is resized, it will always resize proportionally so content is not stretched.

* `contain`: Respecting aspect ratio, scales a video up or down to be entirely contained within output dimensions.

* `scale-down`: Same as contain, but downscales to fit only. Do not upscale.

* `cover`: Respecting aspect ratio, scales a video up or down to entirely cover the output dimensions, with a center-weighted crop of the remainder.

### `height`

Specifies maximum height of the output in pixels. Exact behavior depends on `fit`.

* Acceptable range: 10-2000 pixels

### `width`

Specifies the maximum width of the image in pixels. Exact behavior depends on `fit`.

* Acceptable range: 10-2000 pixels

### `audio`

When `mode` is `video`, specifies whether or not to include the source audio in the output.

* `true`: Includes source audio.

* `false`: Output will be silent.

* Default: `true`

When `mode` is `audio`, audio cannot be false.

### `format`

If `mode` is `frame`, specifies the image output format.

* Acceptable options: `jpg`, `png`

If `mode` is `audio`, specifies the audio output format.

* Acceptable options: `m4a` (default)

### `filename`

Specifies the filename to use in the returned Content-Disposition header. If not specified, the filename will be derived from the source URL.

* Acceptable values:

* Maximum of 120 characters in length.

* Can only contain lowercase letters (a-z), numbers (0-9), hyphens (-), underscores (\_), and an optional extension. A valid name satisfies this regular expression: `^[a-zA-Z0-9-_]+.?[a-zA-Z0-9-_]+$`.

* Examples: `default.mp4`, `shortened-clip_5s`

## Source video requirements

* Input video must be less than 100MB.

* Input video should be an MP4 with H.264 encoded video and AAC or MP3 encoded audio. Other formats may work but are untested.

* Origin must support either HTTP HEAD and range requests, and must return a Content-Range header.

## Limitations

* Maximum input file size is 100 MB. Maximum duration of input video is 10 minutes.

* Media Transformations are not compatible with [Bring Your Own IP (BYOIP)](https://developers.cloudflare.com/byoip/).

* Input video should be an MP4 with H.264 encoded video and AAC or MP3 encoded audio, or animated GIF. Other formats may work but are untested.

## Pricing

After November 1st, 2025, Media Transformations and Image Transformations will use the same subscriptions and usage metrics.

* Generating a still frame (single image) from a video counts as 1 transformation.

* Generating an optimized video or extracting audio counts as 1 transformation *per second of the output* content.

* Each unique transformation, as determined by input and unique combination of flags, is only billed once per calendar month.

* All Media and Image Transformations cost $0.50 per 1,000 monthly unique transformation operations, with a free monthly allocation of 5,000.

---

title: Upload videos · Cloudflare Stream docs

description: Before you upload your video, review the options for uploading a

video, supported formats, and recommendations.

lastUpdated: 2026-03-06T12:19:54.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/stream/uploading-videos/

md: https://developers.cloudflare.com/stream/uploading-videos/index.md

---

Before you upload your video, review the options for uploading a video, supported formats, and recommendations.

## Upload options

| Upload method | When to use |

| - | - |

| [Stream Dashboard](https://dash.cloudflare.com/?to=/:account/stream) | Upload videos from the Stream Dashboard without writing any code. |

| [Upload with a link](https://developers.cloudflare.com/stream/uploading-videos/upload-via-link/) | Upload videos using a link, such as an S3 bucket or content management system. |

| [Upload video file](https://developers.cloudflare.com/stream/uploading-videos/upload-video-file/) | Upload videos stored on a computer. |

| [Direct creator uploads](https://developers.cloudflare.com/stream/uploading-videos/direct-creator-uploads/) | Allows end users of your website or app to upload videos directly to Cloudflare Stream. |

## Supported video formats

Note

Files must be less than 30 GB, and content should be encoded and uploaded in the same frame rate it was recorded.

* MP4

* MKV

* MOV

* AVI

* FLV

* MPEG-2 TS

* MPEG-2 PS

* MXF

* LXF

* GXF

* 3GP

* WebM

* MPG

* Quicktime

## Recommendations for on-demand videos

* Optional but ideal settings:

* MP4 containers

* AAC audio codec

* H264 video codec

* 60 or fewer frames per second

* Closed GOP (*Only required for live streaming.*)

* Mono or Stereo audio. Stream will mix audio tracks with more than two channels down to stereo.

## Frame rates

Stream accepts video uploads at any frame rate. During encoding, Stream re-encodes videos for a maximum of 70 FPS playback. If the original video has a frame rate lower than 70 FPS, Stream re-encodes at the original frame rate.

For variable frame rate content, Stream drops extra frames. For example, if there is more than one frame within a 1/30 second window, Stream drops the extra frames within that period.

---

title: Play video · Cloudflare Stream docs

lastUpdated: 2024-08-30T13:02:26.000Z

chatbotDeprioritize: true

source_url:

html: https://developers.cloudflare.com/stream/viewing-videos/

md: https://developers.cloudflare.com/stream/viewing-videos/index.md

---

* [Use your own player](https://developers.cloudflare.com/stream/viewing-videos/using-own-player/)

* [Use the Stream Player](https://developers.cloudflare.com/stream/viewing-videos/using-the-stream-player/)

* [Secure your Stream](https://developers.cloudflare.com/stream/viewing-videos/securing-your-stream/)

* [Display thumbnails](https://developers.cloudflare.com/stream/viewing-videos/displaying-thumbnails/)

* [Download video or audio](https://developers.cloudflare.com/stream/viewing-videos/download-videos/)

---

title: WebRTC · Cloudflare Stream docs

description: Sub-second latency live streaming (using WHIP) and playback (using

WHEP) to unlimited concurrent viewers.

lastUpdated: 2026-03-02T15:59:53.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/stream/webrtc-beta/

md: https://developers.cloudflare.com/stream/webrtc-beta/index.md

---

Sub-second latency live streaming (using WHIP) and playback (using WHEP) to unlimited concurrent viewers.

WebRTC is ideal for when you need live video to playback in near real-time, such as:

* When the outcome of a live event is time-sensitive (live sports, financial news)

* When viewers interact with the live stream (live Q\&A, auctions, etc.)

* When you want your end users to be able to easily go live or create their own video content, from a web browser or native app

Note

WebRTC streaming is currently in beta, and we'd love to hear what you think. Join the Cloudflare Discord server [using this invite](https://discord.com/invite/cloudflaredev/) and hop into our [Discord channel](https://discord.com/channels/595317990191398933/893253103695065128) to let us know what you're building with WebRTC!

## Step 1: Create a live input

Create a live input using one of the two options:

* Use the **Live inputs** page of the Cloudflare dashboard.

[Go to **Live inputs**](https://dash.cloudflare.com/?to=/:account/stream/inputs)

* Make a POST request to the [`/live_inputs` API endpoint](https://developers.cloudflare.com/api/resources/stream/subresources/live_inputs/methods/create/)

```json

{

"uid": "1a553f11a88915d093d45eda660d2f8c",

...

"webRTC": {

"url": "https://customer-.cloudflarestream.com//webRTC/publish"

},

"webRTCPlayback": {

"url": "https://customer-.cloudflarestream.com//webRTC/play"

},

...

}

```

## Step 2: Go live using WHIP

Every live input has a unique URL that one creator can be stream to. This URL should *only* be shared with the creator — anyone with this URL has the ability to stream live video to this live input.

Copy the URL from either:

* The **Live inputs** page of the Cloudflare dashboard.

[Go to **Live inputs**](https://dash.cloudflare.com/?to=/:account/stream/inputs)

* The `webRTC` key in the API response (see above).

Paste this URL into the example code.

```javascript

// Add a

---

title: 404 - Page Not Found · Cloudflare Vectorize docs

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/vectorize/404/

md: https://developers.cloudflare.com/vectorize/404/index.md

---

# 404

Check the URL, try using our [search](https://developers.cloudflare.com/search/) or try our LLM-friendly [llms.txt directory](https://developers.cloudflare.com/llms.txt).

---

title: Best practices · Cloudflare Vectorize docs

lastUpdated: 2025-02-21T09:48:48.000Z

chatbotDeprioritize: true

source_url:

html: https://developers.cloudflare.com/vectorize/best-practices/

md: https://developers.cloudflare.com/vectorize/best-practices/index.md

---

* [Create indexes](https://developers.cloudflare.com/vectorize/best-practices/create-indexes/)

* [Insert vectors](https://developers.cloudflare.com/vectorize/best-practices/insert-vectors/)

* [List vectors](https://developers.cloudflare.com/vectorize/best-practices/list-vectors/)

* [Query vectors](https://developers.cloudflare.com/vectorize/best-practices/query-vectors/)

---

title: Architectures · Cloudflare Vectorize docs

description: Learn how you can use Vectorize within your existing architecture.

lastUpdated: 2025-10-13T13:40:40.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/vectorize/demos/

md: https://developers.cloudflare.com/vectorize/demos/index.md

---

Learn how you can use Vectorize within your existing architecture.

## Reference architectures

Explore the following reference architectures that use Vectorize:

[Fullstack applications](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/fullstack-application/)

[A practical example of how these services come together in a real fullstack application architecture.](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/fullstack-application/)

[Ingesting BigQuery Data into Workers AI](https://developers.cloudflare.com/reference-architecture/diagrams/ai/bigquery-workers-ai/)

[You can connect a Cloudflare Worker to get data from Google BigQuery and pass it to Workers AI, to run AI Models, powered by serverless GPUs.](https://developers.cloudflare.com/reference-architecture/diagrams/ai/bigquery-workers-ai/)

[Composable AI architecture](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-composable/)

[The architecture diagram illustrates how AI applications can be built end-to-end on Cloudflare, or single services can be integrated with external infrastructure and services.](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-composable/)

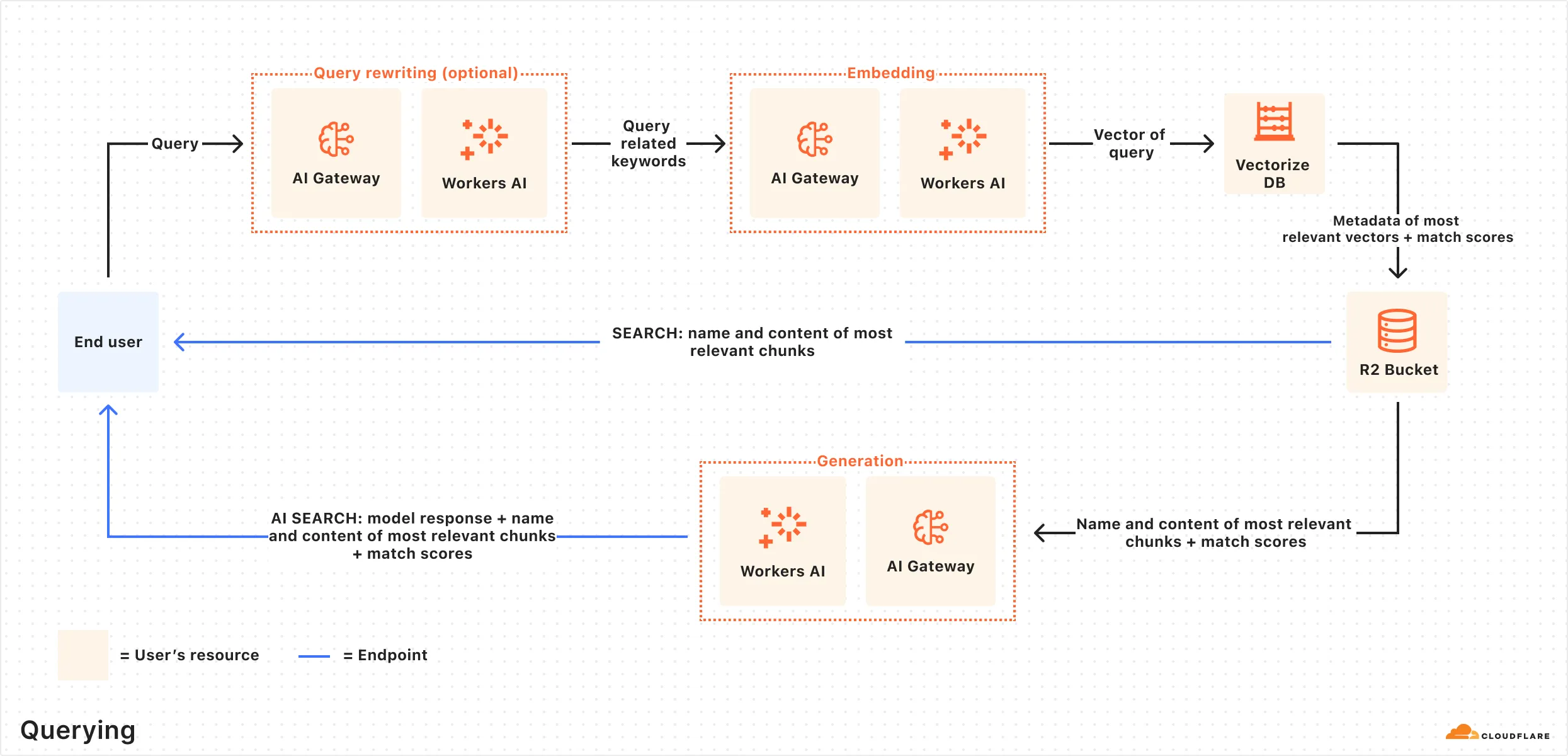

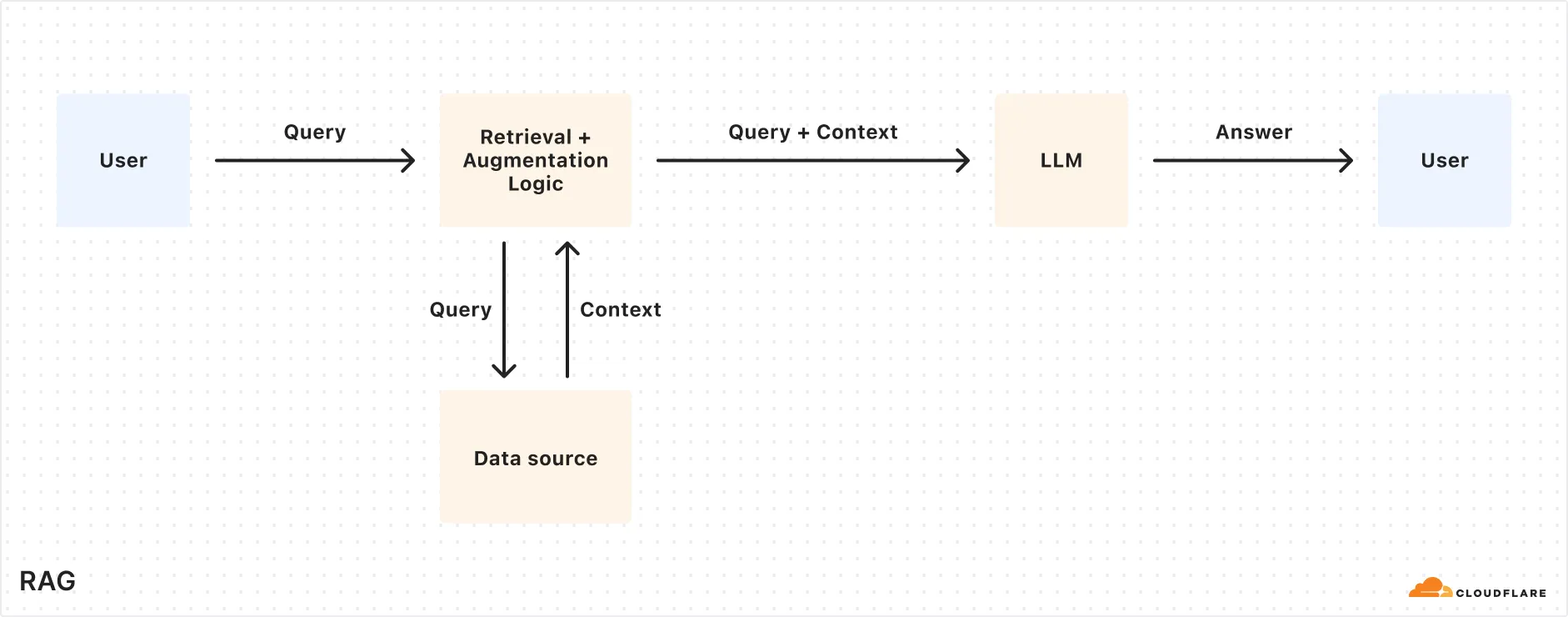

[Retrieval Augmented Generation (RAG)](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-rag/)

[RAG combines retrieval with generative models for better text. It uses external knowledge to create factual, relevant responses, improving coherence and accuracy in NLP tasks like chatbots.](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-rag/)

---

title: Examples · Cloudflare Vectorize docs

description: Explore the following examples for Vectorize.

lastUpdated: 2025-08-18T14:27:42.000Z

chatbotDeprioritize: true

source_url:

html: https://developers.cloudflare.com/vectorize/examples/

md: https://developers.cloudflare.com/vectorize/examples/index.md

---

Explore the following examples for Vectorize.

* [LangChain Integration](https://js.langchain.com/docs/integrations/vectorstores/cloudflare_vectorize/)

* [Retrieval Augmented Generation](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-rag/)

* [Agents](https://developers.cloudflare.com/agents/)

---

title: Get started · Cloudflare Vectorize docs

lastUpdated: 2025-02-21T09:48:48.000Z

chatbotDeprioritize: true

source_url:

html: https://developers.cloudflare.com/vectorize/get-started/

md: https://developers.cloudflare.com/vectorize/get-started/index.md

---

* [Introduction to Vectorize](https://developers.cloudflare.com/vectorize/get-started/intro/)

* [Vectorize and Workers AI](https://developers.cloudflare.com/vectorize/get-started/embeddings/)

---

title: Platform · Cloudflare Vectorize docs

lastUpdated: 2025-02-21T09:48:48.000Z

chatbotDeprioritize: true

source_url:

html: https://developers.cloudflare.com/vectorize/platform/

md: https://developers.cloudflare.com/vectorize/platform/index.md

---

* [Pricing](https://developers.cloudflare.com/vectorize/platform/pricing/)

* [Limits](https://developers.cloudflare.com/vectorize/platform/limits/)

* [Choose a data or storage product](https://developers.cloudflare.com/workers/platform/storage-options/)

* [Changelog](https://developers.cloudflare.com/vectorize/platform/changelog/)

* [Event subscriptions](https://developers.cloudflare.com/vectorize/platform/event-subscriptions/)

---

title: Reference · Cloudflare Vectorize docs

lastUpdated: 2025-02-21T09:48:48.000Z

chatbotDeprioritize: true

source_url:

html: https://developers.cloudflare.com/vectorize/reference/

md: https://developers.cloudflare.com/vectorize/reference/index.md

---

* [Vector databases](https://developers.cloudflare.com/vectorize/reference/what-is-a-vector-database/)

* [Vectorize API](https://developers.cloudflare.com/vectorize/reference/client-api/)

* [Metadata filtering](https://developers.cloudflare.com/vectorize/reference/metadata-filtering/)

* [Transition legacy Vectorize indexes](https://developers.cloudflare.com/vectorize/reference/transition-vectorize-legacy/)

* [Wrangler commands](https://developers.cloudflare.com/vectorize/reference/wrangler-commands/)

---

title: Tutorials · Cloudflare Vectorize docs

description: View tutorials to help you get started with Vectorize.

lastUpdated: 2025-08-18T14:27:42.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/vectorize/tutorials/

md: https://developers.cloudflare.com/vectorize/tutorials/index.md

---

View tutorials to help you get started with Vectorize.

## Docs

| Name | Last Updated | Difficulty |

| - | - | - |

| [Build a Retrieval Augmented Generation (RAG) AI](https://developers.cloudflare.com/workers-ai/guides/tutorials/build-a-retrieval-augmented-generation-ai/) | over 1 year ago | Beginner |

## Videos

Welcome to the Cloudflare Developer Channel

Welcome to the Cloudflare Developers YouTube channel. We've got tutorials and working demos and everything you need to level up your projects. Whether you're working on your next big thing or just dorking around with some side projects, we've got you covered! So why don't you come hang out, subscribe to our developer channel and together we'll build something awesome. You're gonna love it.

Use Vectorize to add additional context to your AI Applications through RAG

A RAG based AI Chat app that uses Vectorize to access video game data for employees of Gamertown.

Learn AI Development (models, embeddings, vectors)

In this workshop, Kristian Freeman, Cloudflare Developer Advocate, teaches the basics of AI Development - models, embeddings, and vectors (including vector databases).

---

title: Vectorize REST API · Cloudflare Vectorize docs

lastUpdated: 2024-12-16T22:33:26.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/vectorize/vectorize-api/

md: https://developers.cloudflare.com/vectorize/vectorize-api/index.md

---

---

title: 404 - Page Not Found · Cloudflare Workers docs

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/workers/404/

md: https://developers.cloudflare.com/workers/404/index.md

---

# 404

Check the URL, try using our [search](https://developers.cloudflare.com/search/) or try our LLM-friendly [llms.txt directory](https://developers.cloudflare.com/llms.txt).

---

title: AI Assistant · Cloudflare Workers docs

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/workers/ai/

md: https://developers.cloudflare.com/workers/ai/index.md

---

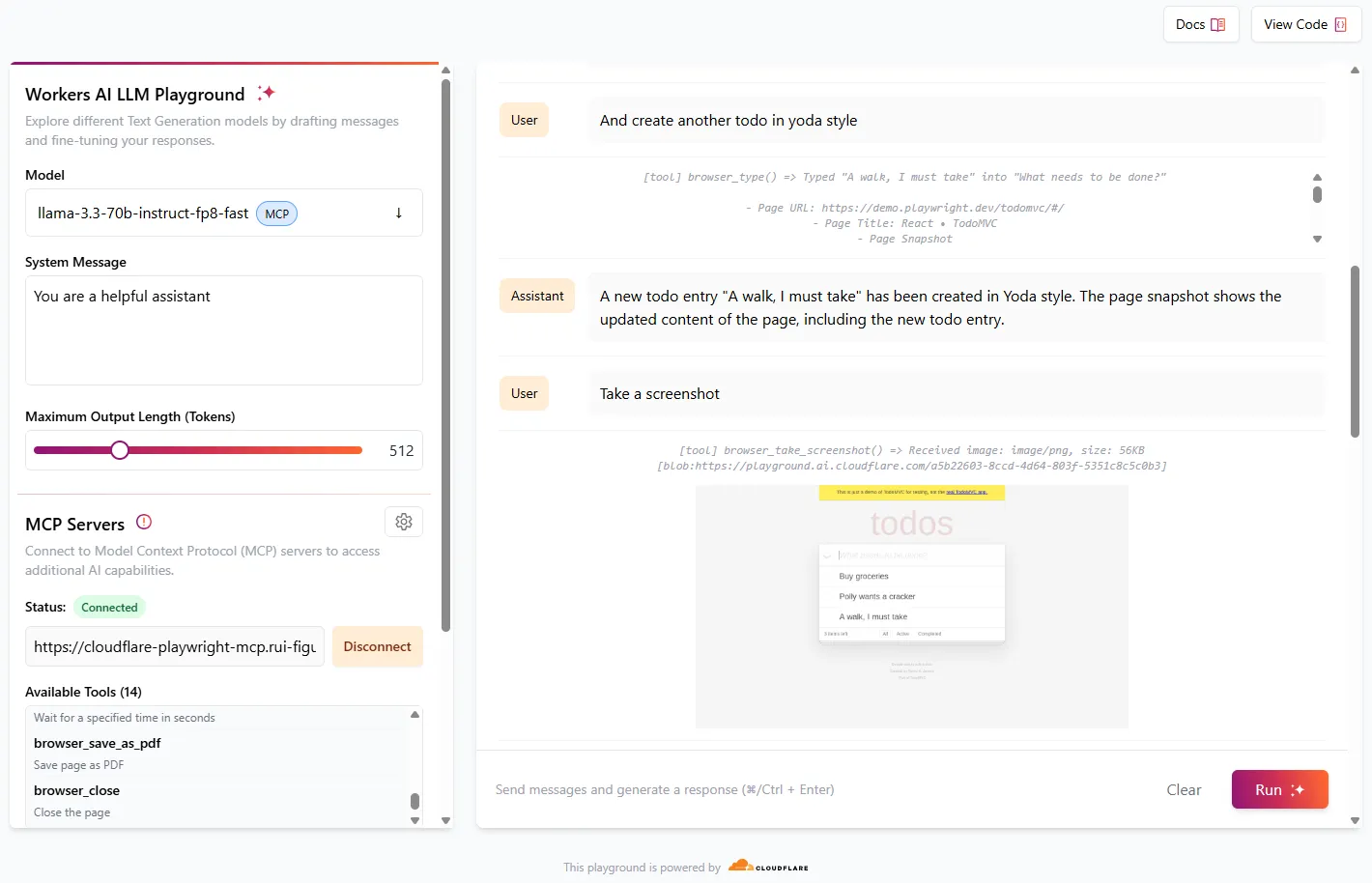

# Meet your AI assistant, CursorAI Preview

Cursor is an experimental AI assistant, trained to answer questions about Cloudflare and powered by [Cloudflare Workers](https://developers.cloudflare.com/workers/), [Workers AI](https://developers.cloudflare.com/workers-ai/), [Vectorize](https://developers.cloudflare.com/vectorize/), and [AI Gateway](https://developers.cloudflare.com/ai-gateway/). Cursor is here to help answer your Cloudflare questions, so ask away!

Cursor is an experimental AI preview, meaning that the answers provided are often incorrect, incomplete, or lacking in context. Be sure to double-check what Cursor recommends using the linked sources provided.

Use of Cloudflare Cursor is subject to the Cloudflare Website and Online Services [Terms of Use](https://www.cloudflare.com/website-terms/). You acknowledge and agree that the output generated by Cursor has not been verified by Cloudflare for accuracy and does not represent Cloudflare’s views.

---

title: Best practices · Cloudflare Workers docs

lastUpdated: 2026-02-12T20:49:08.000Z

chatbotDeprioritize: true

source_url:

html: https://developers.cloudflare.com/workers/best-practices/

md: https://developers.cloudflare.com/workers/best-practices/index.md

---

* [Workers Best Practices](https://developers.cloudflare.com/workers/best-practices/workers-best-practices/)

---

title: CI/CD · Cloudflare Workers docs

description: Set up continuous integration and continuous deployment for your Workers.

lastUpdated: 2025-02-05T10:06:53.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/workers/ci-cd/

md: https://developers.cloudflare.com/workers/ci-cd/index.md

---

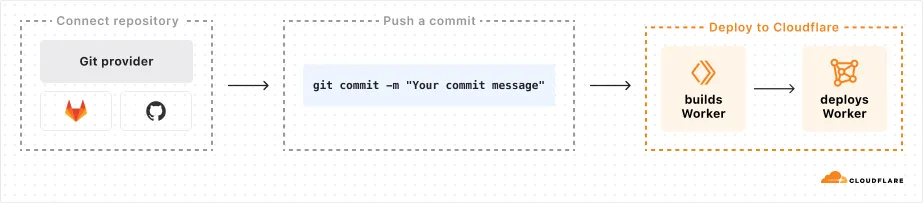

You can set up continuous integration and continuous deployment (CI/CD) for your Workers by using either the integrated build system, [Workers Builds](#workers-builds), or using [external providers](#external-cicd) to optimize your development workflow.

## Why use CI/CD?

Using a CI/CD pipeline to deploy your Workers is a best practice because it:

* Automates the build and deployment process, removing the need for manual `wrangler deploy` commands.

* Ensures consistent builds and deployments across your team by using the same source control management (SCM) system.

* Reduces variability and errors by deploying in a uniform environment.

* Simplifies managing access to production credentials.

## Which CI/CD should I use?

Choose [Workers Builds](https://developers.cloudflare.com/workers/ci-cd/builds) if you want a fully integrated solution within Cloudflare's ecosystem that requires minimal setup and configuration for GitHub or GitLab users.

We recommend using [external CI/CD providers](https://developers.cloudflare.com/workers/ci-cd/external-cicd) if:

* You have a self-hosted instance of GitHub or GitLabs, which is currently not supported in Workers Builds' [Git integration](https://developers.cloudflare.com/workers/ci-cd/builds/git-integration/)

* You are using a Git provider that is not GitHub or GitLab

## Workers Builds

[Workers Builds](https://developers.cloudflare.com/workers/ci-cd/builds) is Cloudflare's native CI/CD system that allows you to integrate with GitHub or GitLab to automatically deploy changes with each new push to a selected branch (e.g. `main`).

Ready to streamline your Workers deployments? Get started with [Workers Builds](https://developers.cloudflare.com/workers/ci-cd/builds/#get-started).

## External CI/CD

You can also choose to set up your CI/CD pipeline with an external provider.

* [GitHub Actions](https://developers.cloudflare.com/workers/ci-cd/external-cicd/github-actions/)

* [GitLab CI/CD](https://developers.cloudflare.com/workers/ci-cd/external-cicd/gitlab-cicd/)

---

title: Configuration · Cloudflare Workers docs

description: Worker configuration is managed through a Wrangler configuration

file, which defines your project settings, bindings, and deployment options.

Wrangler is the command-line tool used to develop, test, and deploy Workers.

lastUpdated: 2026-02-18T14:15:12.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/workers/configuration/

md: https://developers.cloudflare.com/workers/configuration/index.md

---

Worker configuration is managed through a [Wrangler configuration file](https://developers.cloudflare.com/workers/wrangler/configuration/), which defines your project settings, bindings, and deployment options. Wrangler is the command-line tool used to develop, test, and deploy Workers.

For more information on Wrangler, refer to [Wrangler](https://developers.cloudflare.com/workers/wrangler/).

* [Bindings](https://developers.cloudflare.com/workers/runtime-apis/bindings/)

* [Compatibility dates](https://developers.cloudflare.com/workers/configuration/compatibility-dates/)

* [Compatibility flags](https://developers.cloudflare.com/workers/configuration/compatibility-flags/)

* [Cron Triggers](https://developers.cloudflare.com/workers/configuration/cron-triggers/)

* [Environment variables](https://developers.cloudflare.com/workers/configuration/environment-variables/)

* [Integrations](https://developers.cloudflare.com/workers/configuration/integrations/)

* [Multipart upload metadata](https://developers.cloudflare.com/workers/configuration/multipart-upload-metadata/)

* [Page Rules](https://developers.cloudflare.com/workers/configuration/workers-with-page-rules/)

* [Placement](https://developers.cloudflare.com/workers/configuration/placement/)

* [Preview URLs](https://developers.cloudflare.com/workers/configuration/previews/)

* [Routes and domains](https://developers.cloudflare.com/workers/configuration/routing/)

* [Secrets](https://developers.cloudflare.com/workers/configuration/secrets/)

* [Versions & Deployments](https://developers.cloudflare.com/workers/configuration/versions-and-deployments/)

* [Workers Sites](https://developers.cloudflare.com/workers/configuration/sites/)

---

title: Databases · Cloudflare Workers docs

description: Explore database integrations for your Worker projects.

lastUpdated: 2025-02-05T10:06:53.000Z

chatbotDeprioritize: true

source_url:

html: https://developers.cloudflare.com/workers/databases/

md: https://developers.cloudflare.com/workers/databases/index.md

---

Explore database integrations for your Worker projects.

* [Connect to databases](https://developers.cloudflare.com/workers/databases/connecting-to-databases/)

* [Analytics Engine](https://developers.cloudflare.com/analytics/analytics-engine/)

* [Vectorize (vector database)](https://developers.cloudflare.com/vectorize/)

* [Cloudflare D1](https://developers.cloudflare.com/d1/)

* [Hyperdrive](https://developers.cloudflare.com/hyperdrive/)

* [3rd Party Integrations](https://developers.cloudflare.com/workers/databases/third-party-integrations/)

---

title: Demos and architectures · Cloudflare Workers docs

description: Learn how you can use Workers within your existing application and

architecture.

lastUpdated: 2025-10-13T13:40:40.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/workers/demos/

md: https://developers.cloudflare.com/workers/demos/index.md

---

Learn how you can use Workers within your existing application and architecture.

## Demos

Explore the following demo applications for Workers.

* [Starter code for D1 Sessions API:](https://github.com/cloudflare/templates/tree/main/d1-starter-sessions-api-template) An introduction to D1 Sessions API. This demo simulates purchase orders administration.

* [JavaScript-native RPC on Cloudflare Workers <> Named Entrypoints:](https://github.com/cloudflare/js-rpc-and-entrypoints-demo) This is a collection of examples of communicating between multiple Cloudflare Workers using the remote-procedure call (RPC) system that is built into the Workers runtime.

* [Workers for Platforms Example Project:](https://github.com/cloudflare/workers-for-platforms-example) Explore how you could manage thousands of Workers with a single Cloudflare Workers account.

* [Cloudflare Workers Chat Demo:](https://github.com/cloudflare/workers-chat-demo) This is a demo app written on Cloudflare Workers utilizing Durable Objects to implement real-time chat with stored history.

* [Turnstile Demo:](https://github.com/cloudflare/turnstile-demo-workers) A simple demo with a Turnstile-protected form, using Cloudflare Workers. With the code in this repository, we demonstrate implicit rendering and explicit rendering.

* [Wildebeest:](https://github.com/cloudflare/wildebeest) Wildebeest is an ActivityPub and Mastodon-compatible server whose goal is to allow anyone to operate their Fediverse server and identity on their domain without needing to keep infrastructure, with minimal setup and maintenance, and running in minutes.

* [D1 Northwind Demo:](https://github.com/cloudflare/d1-northwind) This is a demo of the Northwind dataset, running on Cloudflare Workers, and D1 - Cloudflare's SQL database, running on SQLite.

* [Multiplayer Doom Workers:](https://github.com/cloudflare/doom-workers) A WebAssembly Doom port with multiplayer support running on top of Cloudflare's global network using Workers, WebSockets, Pages, and Durable Objects.

* [Queues Web Crawler:](https://github.com/cloudflare/queues-web-crawler) An example use-case for Queues, a web crawler built on Browser Rendering and Puppeteer. The crawler finds the number of links to Cloudflare.com on the site, and archives a screenshot to Workers KV.

* [DMARC Email Worker:](https://github.com/cloudflare/dmarc-email-worker) A Cloudflare worker script to process incoming DMARC reports, store them, and produce analytics.

* [Access External Auth Rule Example Worker:](https://github.com/cloudflare/workers-access-external-auth-example) This is a worker that allows you to quickly setup an external evalutation rule in Cloudflare Access.

## Reference architectures

Explore the following reference architectures that use Workers:

[Fullstack applications](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/fullstack-application/)

[A practical example of how these services come together in a real fullstack application architecture.](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/fullstack-application/)

[Storing user generated content](https://developers.cloudflare.com/reference-architecture/diagrams/storage/storing-user-generated-content/)

[Store user-generated content in R2 for fast, secure, and cost-effective architecture.](https://developers.cloudflare.com/reference-architecture/diagrams/storage/storing-user-generated-content/)

[Optimizing and securing connected transportation systems](https://developers.cloudflare.com/reference-architecture/diagrams/iot/optimizing-and-securing-connected-transportation-systems/)

[This diagram showcases Cloudflare components optimizing connected transportation systems. It illustrates how their technologies minimize latency, ensure reliability, and strengthen security for critical data flow.](https://developers.cloudflare.com/reference-architecture/diagrams/iot/optimizing-and-securing-connected-transportation-systems/)

[Ingesting BigQuery Data into Workers AI](https://developers.cloudflare.com/reference-architecture/diagrams/ai/bigquery-workers-ai/)

[You can connect a Cloudflare Worker to get data from Google BigQuery and pass it to Workers AI, to run AI Models, powered by serverless GPUs.](https://developers.cloudflare.com/reference-architecture/diagrams/ai/bigquery-workers-ai/)

[Event notifications for storage](https://developers.cloudflare.com/reference-architecture/diagrams/storage/event-notifications-for-storage/)

[Use Cloudflare Workers or an external service to monitor for notifications about data changes and then handle them appropriately.](https://developers.cloudflare.com/reference-architecture/diagrams/storage/event-notifications-for-storage/)

[Extend ZTNA with external authorization and serverless computing](https://developers.cloudflare.com/reference-architecture/diagrams/sase/augment-access-with-serverless/)

[Cloudflare's ZTNA enhances access policies using external API calls and Workers for robust security. It verifies user authentication and authorization, ensuring only legitimate access to protected resources.](https://developers.cloudflare.com/reference-architecture/diagrams/sase/augment-access-with-serverless/)

[Cloudflare Security Architecture](https://developers.cloudflare.com/reference-architecture/architectures/security/)

[This document provides insight into how this network and platform are architected from a security perspective, how they are operated, and what services are available for businesses to address their own security challenges.](https://developers.cloudflare.com/reference-architecture/architectures/security/)

[Composable AI architecture](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-composable/)

[The architecture diagram illustrates how AI applications can be built end-to-end on Cloudflare, or single services can be integrated with external infrastructure and services.](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-composable/)

[A/B-testing using Workers](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/a-b-testing-using-workers/)

[Cloudflare's low-latency, fully serverless compute platform, Workers offers powerful capabilities to enable A/B testing using a server-side implementation.](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/a-b-testing-using-workers/)

[Serverless global APIs](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/serverless-global-apis/)

[An example architecture of a serverless API on Cloudflare and aims to illustrate how different compute and data products could interact with each other.](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/serverless-global-apis/)

[Serverless ETL pipelines](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/serverless-etl/)

[Cloudflare enables fully serverless ETL pipelines, significantly reducing complexity, accelerating time to production, and lowering overall costs.](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/serverless-etl/)

[Egress-free object storage in multi-cloud setups](https://developers.cloudflare.com/reference-architecture/diagrams/storage/egress-free-storage-multi-cloud/)

[Learn how to use R2 to get egress-free object storage in multi-cloud setups.](https://developers.cloudflare.com/reference-architecture/diagrams/storage/egress-free-storage-multi-cloud/)

[Retrieval Augmented Generation (RAG)](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-rag/)

[RAG combines retrieval with generative models for better text. It uses external knowledge to create factual, relevant responses, improving coherence and accuracy in NLP tasks like chatbots.](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-rag/)

[Automatic captioning for video uploads](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-video-caption/)

[By integrating automatic speech recognition technology into video platforms, content creators, publishers, and distributors can reach a broader audience, including individuals with hearing impairments or those who prefer to consume content in different languages.](https://developers.cloudflare.com/reference-architecture/diagrams/ai/ai-video-caption/)

[Serverless image content management](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/serverless-image-content-management/)

[Leverage various components of Cloudflare's ecosystem to construct a scalable image management solution](https://developers.cloudflare.com/reference-architecture/diagrams/serverless/serverless-image-content-management/)

---

title: Development & testing · Cloudflare Workers docs

description: Develop and test your Workers locally.

lastUpdated: 2025-12-29T17:29:32.000Z

chatbotDeprioritize: false

source_url:

html: https://developers.cloudflare.com/workers/development-testing/

md: https://developers.cloudflare.com/workers/development-testing/index.md

---

You can build, run, and test your Worker code on your own local machine before deploying it to Cloudflare's network. This is made possible through [Miniflare](https://developers.cloudflare.com/workers/testing/miniflare/), a simulator that executes your Worker code using the same runtime used in production, [`workerd`](https://github.com/cloudflare/workerd).

[By default](https://developers.cloudflare.com/workers/development-testing/#defaults), your Worker's bindings [connect to locally simulated resources](https://developers.cloudflare.com/workers/development-testing/#bindings-during-local-development), but can be configured to interact with the real, production resource with [remote bindings](https://developers.cloudflare.com/workers/development-testing/#remote-bindings).

## Core concepts

### Worker execution vs Bindings

When developing Workers, it's important to understand two distinct concepts:

* **Worker execution**: Where your Worker code actually runs (on your local machine vs on Cloudflare's infrastructure).

* [**Bindings**](https://developers.cloudflare.com/workers/runtime-apis/bindings/): How your Worker interacts with Cloudflare resources (like [KV namespaces](https://developers.cloudflare.com/kv), [R2 buckets](https://developers.cloudflare.com/r2), [D1 databases](https://developers.cloudflare.com/d1), [Queues](https://developers.cloudflare.com/queues/), [Durable Objects](https://developers.cloudflare.com/durable-objects/), etc). In your Worker code, these are accessed via the `env` object (such as `env.MY_KV`).

## Local development

**You can start a local development server using:**

1. The Cloudflare Workers CLI [**Wrangler**](https://developers.cloudflare.com/workers/wrangler/), using the built-in [`wrangler dev`](https://developers.cloudflare.com/workers/wrangler/commands/#dev) command.

* npm

```sh

npx wrangler dev

```

* yarn

```sh

yarn wrangler dev

```

* pnpm

```sh

pnpm wrangler dev

```

1. [**Vite**](https://vite.dev/), using the [**Cloudflare Vite plugin**](https://developers.cloudflare.com/workers/vite-plugin/).

* npm

```sh

npx vite dev

```

* yarn

```sh

yarn vite dev

```

* pnpm

```sh

pnpm vite dev

```

Both Wrangler and the Cloudflare Vite plugin use [Miniflare](https://developers.cloudflare.com/workers/testing/miniflare/) under the hood, and are developed and maintained by the Cloudflare team. For guidance on choosing when to use Wrangler versus Vite, see our guide [Choosing between Wrangler & Vite](https://developers.cloudflare.com/workers/development-testing/wrangler-vs-vite/).

* [Get started with Wrangler](https://developers.cloudflare.com/workers/wrangler/install-and-update/)

* [Get started with the Cloudflare Vite plugin](https://developers.cloudflare.com/workers/vite-plugin/get-started/)

### Defaults

By default, running `wrangler dev` / `vite dev` (when using the [Vite plugin](https://developers.cloudflare.com/workers/vite-plugin/get-started/)) means that:

* Your Worker code runs on your local machine.

* All resources your Worker is bound to in your [Wrangler configuration](https://developers.cloudflare.com/workers/wrangler/configuration/) are simulated locally.

### Bindings during local development

[Bindings](https://developers.cloudflare.com/workers/runtime-apis/bindings/) are interfaces that allow your Worker to interact with various Cloudflare resources (like [KV namespaces](https://developers.cloudflare.com/kv), [R2 buckets](https://developers.cloudflare.com/r2), [D1 databases](https://developers.cloudflare.com/d1), [Queues](https://developers.cloudflare.com/queues/), [Durable Objects](https://developers.cloudflare.com/durable-objects/), etc). In your Worker code, these are accessed via the `env` object (such as `env.MY_KV`).

During local development, your Worker code interacts with these bindings using the exact same API calls (such as `env.MY_KV.put()`) as it would in a deployed environment. These local resources are initially empty, but you can populate them with data, as documented in [Adding local data](https://developers.cloudflare.com/workers/development-testing/local-data/).

* By default, bindings connect to **local resource simulations** (except for [AI bindings](https://developers.cloudflare.com/workers-ai/configuration/bindings/), as AI models always run remotely).

* You can override this default behavior and **connect to the remote resource** on a per-binding basis with [remote bindings](https://developers.cloudflare.com/workers/development-testing/#remote-bindings). This lets you connect to real, production resources while still running your Worker code locally.

* When using `wrangler dev`, you can temporarily disable all [remote bindings](https://developers.cloudflare.com/workers/development-testing/#remote-bindings) (and connect only to local resources) by providing the `--local` flag (i.e. `wrangler dev --local`)

## Remote bindings

**Remote bindings** are bindings that are configured to connect to the deployed, remote resource during local development *instead* of the locally simulated resource. Remote bindings are supported by [**Wrangler**](https://developers.cloudflare.com/workers/wrangler/), the [**Cloudflare Vite plugin**](https://developers.cloudflare.com/workers/vite-plugin/), and the `@cloudflare/vitest-pool-workers` package. You can configure remote bindings by setting `remote: true` in the binding definition.

### Example configuration

* wrangler.jsonc

```jsonc

{

"name": "my-worker",

// Set this to today's date

"compatibility_date": "2026-03-09",

"r2_buckets": [

{

"bucket_name": "screenshots-bucket",

"binding": "screenshots_bucket",

"remote": true,

},

],

}

```

* wrangler.toml

```toml

name = "my-worker"

# Set this to today's date

compatibility_date = "2026-03-09"

[[r2_buckets]]

bucket_name = "screenshots-bucket"

binding = "screenshots_bucket"

remote = true

```

When remote bindings are configured, your Worker still **executes locally**, only the underlying resources your bindings connect to change. For all bindings marked with `remote: true`, Miniflare will route its operations (such as `env.MY_KV.put()`) to the deployed resource. All other bindings not explicitly configured with `remote: true` continue to use their default local simulations.

### Integration with environments

Remote Bindings work well together with [Workers Environments](https://developers.cloudflare.com/workers/wrangler/environments). To protect production data, you can create a development or staging environment and specify different resources in your [Wrangler configuration](https://developers.cloudflare.com/workers/wrangler/configuration/) than you would use for production.

**For example:**

* wrangler.jsonc

```jsonc

{

"name": "my-worker",

// Set this to today's date

"compatibility_date": "2026-03-09",

"env": {

"production": {

"r2_buckets": [

{

"bucket_name": "screenshots-bucket",

"binding": "screenshots_bucket",

},

],

},

"staging": {

"r2_buckets": [

{

"bucket_name": "preview-screenshots-bucket",

"binding": "screenshots_bucket",

"remote": true,

},

],

},

},

}

```

* wrangler.toml

```toml

name = "my-worker"

# Set this to today's date

compatibility_date = "2026-03-09"

[[env.production.r2_buckets]]

bucket_name = "screenshots-bucket"

binding = "screenshots_bucket"

[[env.staging.r2_buckets]]

bucket_name = "preview-screenshots-bucket"

binding = "screenshots_bucket"

remote = true

```

Running `wrangler dev -e staging` (or `CLOUDFLARE_ENV=staging vite dev`) with the above configuration means that:

* Your Worker code runs locally

* All calls made to `env.screenshots_bucket` will use the `preview-screenshots-bucket` resource, rather than the production `screenshots-bucket`.

### Recommended remote bindings

We recommend configuring specific bindings to connect to their remote counterparts. These services often rely on Cloudflare's network infrastructure or have complex backends that are not fully simulated locally.

The following bindings are recommended to have `remote: true` in your Wrangler configuration:

#### [Browser Rendering](https://developers.cloudflare.com/workers/wrangler/configuration/#browser-rendering):

To interact with a real headless browser for rendering. There is no current local simulation for Browser Rendering.

* wrangler.jsonc

```jsonc

{

"browser": {

"binding": "MY_BROWSER",

"remote": true

},

}

```

* wrangler.toml

```toml

[browser]

binding = "MY_BROWSER"

remote = true

```

#### [Workers AI](https://developers.cloudflare.com/workers/wrangler/configuration/#workers-ai):

To utilize actual AI models deployed on Cloudflare's network for inference. There is no current local simulation for Workers AI.

* wrangler.jsonc

```jsonc

{

"ai": {

"binding": "AI",

"remote": true

},

}

```

* wrangler.toml

```toml

[ai]

binding = "AI"

remote = true

```

#### [Vectorize](https://developers.cloudflare.com/workers/wrangler/configuration/#vectorize-indexes):

To connect to your production Vectorize indexes for accurate vector search and similarity operations. There is no current local simulation for Vectorize.

* wrangler.jsonc

```jsonc

{

"vectorize": [

{

"binding": "MY_VECTORIZE_INDEX",

"index_name": "my-prod-index",

"remote": true

}

],

}

```

* wrangler.toml

```toml

[[vectorize]]

binding = "MY_VECTORIZE_INDEX"

index_name = "my-prod-index"

remote = true

```

#### [mTLS](https://developers.cloudflare.com/workers/wrangler/configuration/#mtls-certificates):

To verify that the certificate exchange and validation process work as expected. There is no current local simulation for mTLS bindings.

* wrangler.jsonc

```jsonc

{

"mtls_certificates": [

{

"binding": "MY_CLIENT_CERT_FETCHER",

"certificate_id": "",

"remote": true

}

]

}

```

* wrangler.toml

```toml

[[mtls_certificates]]

binding = "MY_CLIENT_CERT_FETCHER"

certificate_id = ""

remote = true

```

#### [Images](https://developers.cloudflare.com/workers/wrangler/configuration/#images):

To connect to a high-fidelity version of the Images API, and verify that all transformations work as expected. Local simulation for Cloudflare Images is [limited with only a subset of features](https://developers.cloudflare.com/images/transform-images/bindings/#interact-with-your-images-binding-locally).

* wrangler.jsonc

```jsonc

{

"images": {

"binding": "IMAGES" ,

"remote": true

}

}

```

* wrangler.toml

```toml

[images]

binding = "IMAGES"

remote = true

```

Note

If `remote: true` is not specified for Browser Rendering, Vectorize, mTLS, or Images, Cloudflare **will issue a warning**. This prompts you to consider enabling it for a more production-like testing experience.

If a Workers AI binding has `remote` set to `false`, Cloudflare will **produce an error**. If the property is omitted, Cloudflare will connect to the remote resource and issue a warning to add the property to configuration.

#### [Dispatch Namespaces](https://developers.cloudflare.com/cloudflare-for-platforms/workers-for-platforms/reference/local-development/):

Workers for Platforms users can configure `remote: true` in dispatch namespace binding definitions:

* wrangler.jsonc

```jsonc

{

"dispatch_namespaces": [

{

"binding": "DISPATCH_NAMESPACE",

"namespace": "testing",

"remote":true

}

]

}

```

* wrangler.toml

```toml

[[dispatch_namespaces]]

binding = "DISPATCH_NAMESPACE"

namespace = "testing"

remote = true

```

This allows you to run your [dynamic dispatch Worker](https://developers.cloudflare.com/cloudflare-for-platforms/workers-for-platforms/how-workers-for-platforms-works/#dynamic-dispatch-worker) locally, while connecting it to your remote dispatch namespace binding. This allows you to test changes to your core dispatching logic against real, deployed [user Workers](https://developers.cloudflare.com/cloudflare-for-platforms/workers-for-platforms/how-workers-for-platforms-works/#user-workers).

### Unsupported remote bindings

Certain bindings are not supported for remote connections (i.e. with `remote: true`) during local development. These will always use local simulations or local values.

If `remote: true` is specified in Wrangler configuration for any of the following unsupported binding types, Cloudflare **will issue an error**. See [all supported and unsupported bindings for remote bindings](https://developers.cloudflare.com/workers/development-testing/bindings-per-env/).

* [**Durable Objects**](https://developers.cloudflare.com/workers/wrangler/configuration/#durable-objects): Enabling remote connections for Durable Objects may be supported in the future, but currently will always run locally. However, using Durable Objects in combination with remote bindings is possible. Refer to [Using remote resources with Durable Objects and Workflows](#using-remote-resources-with-durable-objects-and-workflows) below.

* [**Workflows**](https://developers.cloudflare.com/workflows/): Enabling remote connections for Workflows may be supported in the future, but currently will only run locally. However, using Workflows in combination with remote bindings is possible. Refer to [Using remote resources with Durable Objects and Workflows](#using-remote-resources-with-durable-objects-and-workflows) below.

* [**Environment Variables (`vars`)**](https://developers.cloudflare.com/workers/wrangler/configuration/#environment-variables): Environment variables are intended to be distinct between local development and deployed environments. They are easily configurable locally (such as in a `.dev.vars` file or directly in Wrangler configuration).

* [**Secrets**](https://developers.cloudflare.com/workers/wrangler/configuration/#secrets): Like environment variables, secrets are expected to have different values in local development versus deployed environments for security reasons. Use `.dev.vars` for local secret management.

* [**Static Assets**](https://developers.cloudflare.com/workers/wrangler/configuration/#assets) Static assets are always served from your local disk during development for speed and direct feedback on changes.

* [**Version Metadata**](https://developers.cloudflare.com/workers/runtime-apis/bindings/version-metadata/): Since your Worker code is running locally, version metadata (like commit hash, version tags) associated with a specific deployed version is not applicable or accurate.

* [**Analytics Engine**](https://developers.cloudflare.com/analytics/analytics-engine/): Local development sessions typically don't contribute data directly to production Analytics Engine.

* [**Hyperdrive**](https://developers.cloudflare.com/workers/wrangler/configuration/#hyperdrive): This is being actively worked on, but is currently unsupported.

* [**Rate Limiting**](https://developers.cloudflare.com/workers/runtime-apis/bindings/rate-limit/#configuration): Local development sessions typically should not share or affect rate limits of your deployed Workers. Rate limiting logic should be tested against local simulations.

Note

If you have use-cases for connecting to any of the remote resources above, please [open a feature request](https://github.com/cloudflare/workers-sdk/issues) in our [`workers-sdk` repository](https://github.com/cloudflare/workers-sdk).

#### Using remote resources with Durable Objects and Workflows

While Durable Object and Workflow bindings cannot currently be remote, you can still use them during local development and have them interact with remote resources.

There are two recommended patterns for this:

* **Local Durable Objects/Workflows with remote bindings:**

When you enable remote bindings in your [Wrangler configuration](https://developers.cloudflare.com/workers/wrangler/configuration), your locally running Durable Objects and Workflows can access remote resources. This allows such bindings, although run locally, to interact with remote resources during local development.

* **Accessing remote Durable Objects/Workflows via service bindings:**

To interact with remote Durable Object or Workflow instances, deploy a Worker that defines those. Then, in your local Worker, configure a remote [service binding](https://developers.cloudflare.com/workers/runtime-apis/bindings/service-bindings/) pointing to the deployed Worker. Your local Worker will be then able to interact with the remote deployed Worker, which in turn can communicate with the remote Durable Objects/Workflows. Using this method, you can create a communication channel via the remote service binding, effectively using the deployed Worker as a proxy interface to the remote bindings during local development.

### Important Considerations

* **Data modification**: Operations (writes, deletes, updates) on bindings connected remotely will affect your actual data in the targeted Cloudflare resource (be it preview or production).

* **Billing**: Interactions with remote Cloudflare services through these connections will incur standard operational costs for those services (such as KV operations, R2 storage/operations, AI requests, D1 usage).

* **Network latency**: Expect network latency for operations on these remotely connected bindings, as they involve communication over the internet.

### API

Wrangler provides programmatic utilities to help tooling authors support remote binding connections when running Workers code with [Miniflare](https://developers.cloudflare.com/workers/testing/miniflare/).

**Key APIs include:**

* [`startRemoteProxySession`](#startRemoteProxySession): Starts a proxy session that allows interaction with remote bindings.

* [`unstable_convertConfigBindingsToStartWorkerBindings`](#unstable_convertconfigbindingstostartworkerbindings): Utility for converting binding definitions.

* [`experimental_maybeStartOrUpdateProxySession`](#experimental_maybestartorupdatemixedmodesession): Convenience function to easily start or update a proxy session.

#### `startRemoteProxySession`

This function starts a proxy session for a given set of bindings. It accepts options to control session behavior, including an `auth` option with your Cloudflare account ID and API token for remote binding access.

It returns an object with:

* `ready` Promise\: Resolves when the session is ready.

* `dispose` () => Promise\: Stops the session.

* `updateBindings` (bindings: StartDevWorkerInput\['bindings']) => Promise\: Updates session bindings.

* `remoteProxyConnectionString` remoteProxyConnectionString: String to pass to Miniflare for remote binding access.

#### `unstable_convertConfigBindingsToStartWorkerBindings`

The `unstable_readConfig` utility returns an `Unstable_Config` object which includes the definition of the bindings included in the configuration file. These bindings definitions are however not directly compatible with `startRemoteProxySession`. It can be quite convenient to however read the binding declarations with `unstable_readConfig` and then pass them to `startRemoteProxySession`, so for this wrangler exposes `unstable_convertConfigBindingsToStartWorkerBindings` which is a simple utility to convert the bindings in an `Unstable_Config` object into a structure that can be passed to `startRemoteProxySession`.

Note

This type conversion is temporary. In the future, the types will be unified so you can pass the config object directly to `startRemoteProxySession`.

#### `maybeStartOrUpdateRemoteProxySession`

This wrapper simplifies proxy session management. It takes:

* An object that contains either:

* the path to a Wrangler configuration and a potential target environment

* the name of the Worker and the bindings it is using

* The current proxy session details (this parameter can be set to `null` or not being provided if none).

* Potentially the auth data to use for the remote proxy session.

It returns an object with the proxy session details if started or updated, or `null` if no proxy session is needed.

The function:

* Based on the first argument prepares the input arguments for the proxy session.

* If there are no remote bindings to be used (nor a pre-existing proxy session) it returns null, signaling that no proxy session is needed.

* If the details of an existing proxy session have been provided it updates the proxy session accordingly.

* Otherwise if starts a new proxy session.

* Returns the proxy session details (that can later be passed as the second argument to `maybeStartOrUpdateRemoteProxySession`).

#### Example

Here's a basic example of using Miniflare with `maybeStartOrUpdateRemoteProxySession` to provide a local dev session with remote bindings. This example uses a single hardcoded KV binding.

* JavaScript

```js

import { Miniflare, MiniflareOptions } from "miniflare";

import { maybeStartOrUpdateRemoteProxySession } from "wrangler";

let mf;

let remoteProxySessionDetails = null;

async function startOrUpdateDevSession() {

remoteProxySessionDetails = await maybeStartOrUpdateRemoteProxySession(

{

bindings: {

MY_KV: {

type: "kv_namespace",

id: "kv-id",

remote: true,

},

},

},

remoteProxySessionDetails,

);

const miniflareOptions = {

scriptPath: "./worker.js",

kvNamespaces: {

MY_KV: {

id: "kv-id",

remoteProxyConnectionString:

remoteProxySessionDetails?.session.remoteProxyConnectionString,

},

},

};

if (!mf) {

mf = new Miniflare(miniflareOptions);

} else {

mf.setOptions(miniflareOptions);

}

}

// ... tool logic that invokes `startOrUpdateDevSession()` ...

// ... once the dev session is no longer needed run

// `remoteProxySessionDetails?.session.dispose()`

```

* TypeScript

```ts

import { Miniflare, MiniflareOptions } from "miniflare";

import { maybeStartOrUpdateRemoteProxySession } from "wrangler";

let mf: Miniflare | null;

let remoteProxySessionDetails: Awaited<

ReturnType

> | null = null;

async function startOrUpdateDevSession() {

remoteProxySessionDetails = await maybeStartOrUpdateRemoteProxySession(

{

bindings: {

MY_KV: {

type: "kv_namespace",

id: "kv-id",

remote: true,

},

},

},

remoteProxySessionDetails,

);

const miniflareOptions: MiniflareOptions = {

scriptPath: "./worker.js",

kvNamespaces: {

MY_KV: {

id: "kv-id",

remoteProxyConnectionString:

remoteProxySessionDetails?.session.remoteProxyConnectionString,

},

},

};

if (!mf) {

mf = new Miniflare(miniflareOptions);

} else {

mf.setOptions(miniflareOptions);

}

}

// ... tool logic that invokes `startOrUpdateDevSession()` ...

// ... once the dev session is no longer needed run

// `remoteProxySessionDetails?.session.dispose()`

```

## `wrangler dev --remote` (Legacy)

Separate from Miniflare-powered local development, Wrangler also offers a fully remote development mode via [`wrangler dev --remote`](https://developers.cloudflare.com/workers/wrangler/commands/#dev). Remote development is [**not** supported in the Vite plugin](https://developers.cloudflare.com/workers/development-testing/wrangler-vs-vite/).

* npm

```sh

npx wrangler dev --remote

```

* yarn

```sh

yarn wrangler dev --remote

```

* pnpm

```sh

pnpm wrangler dev --remote

```

During **remote development**, all of your Worker code is uploaded to a temporary preview environment on Cloudflare's infrastructure, and changes to your code are automatically uploaded as you save.

When using remote development, all bindings automatically connect to their remote resources. Unlike local development, you cannot configure bindings to use local simulations - they will always use the deployed resources on Cloudflare's network.

### When to use Remote development

* For most development tasks, the most efficient and productive experience will be local development along with [remote bindings](https://developers.cloudflare.com/workers/development-testing/#remote-bindings) when needed.

* You may want to use `wrangler dev --remote` for testing features or behaviors that are highly specific to Cloudflare's network and cannot be adequately simulated locally or tested via remote bindings.

### Considerations

* Iteration is significantly slower than local development due to the upload/deployment step for each change.

### Limitations